The Influence of Feedback in the Simulated Patient Case-History Training among Audiology Students at the International Islamic University Malaysia

Abstract

Background and Objectives

There is scant evidence on the use of simulations for case-history taking in audiology (especially in Malaysia), although this technique is widely used for training medical and nursing students. Feedback is one of the important components of simulation training; however, it is unknown whether feedback from instructors influences the outcome of simulated patient (SP) training for case-history taking among audiology students. The aim of the present study was to determine whether SP training with feedback, in addition to the standard role-play and seminar training, is an effective learning tool for audiology case-history taking.

Subjects and Methods

Twenty-six second-year undergraduate audiology students participated in a cross-over study. All students initially attended two hours of seminar and role-play sessions. They were then divided into three groups: 1) SP training (Group A), 2) SP training with feedback (Group B), and 3) no additional training (Group C). After two training sessions, the groups switched their type of training: 1) Groups A and C received SP training with feedback, and 2) Group B received no additional training. All groups were assessed at three points: 1) pre-test, 2) intermediate test, and 3) post-test. Differences in median normalized gain scores between and within groups were analysed using non-parametric tests at a 95% confidence level.

Results

The groups that received additional SP training (with or without feedback) showed significantly higher normalized gain scores than the group without additional training (p<0.05).

Conclusions

SP training (with or without feedback) is a beneficial learning tool for teaching case-history taking to audiology students.

Introduction

Training audiology students to be competent in their clinical skills is challenging for institutions offering audiology programs [1]. One of the basic clinical skills that audiology students need to acquire is effective case-history taking. To obtain an effective case history, students need professional knowledge of the fundamental aspects of hearing and balance and their related disorders. Students also have to acquire the necessary communication skills, which may include appropriate voice projection, confidence and body language [2,3].

In preparation for clinical placement, student audiologists typically learn case-history taking through seminar training that may include introductory lectures and role-play exercises [4]. Role-play is an exercise involving a student and an instructor, in which the student and fellow students take turns acting as the audiologist and the patient [5]. Cases in role-play training are typically given on the spot, without a prepared script for either actor (audiologist or patient). Despite this standard teaching approach, case-history taking remains challenging for audiology students to learn [2]. Experienced audiologists, not only students, have also reported difficulties in obtaining an effective case history; in particular, they have been reported to lack skills in building a professional and emotional relationship with the patient [6].

Using a simulated patient (SP) can be an alternative approach to teaching student audiologists case-history taking that addresses the above issue. An SP is someone (either a real patient or a lay individual) who has been trained sufficiently to act as a real patient, presenting specified symptoms or problems for a designated case [4]. SPs have been used in medicine, allied health sciences and nursing to improve a range of students' clinical and communication skills (for example, during case-history taking) [7,8]. SPs provide several advantages for both the student and the instructor. An SP allows a student to practice repeatedly and learn from mistakes [9]. SP training has been reported to enhance students' interpersonal skills and empathy towards the client, and to help them learn ethics and professionalism [10,11]. For the instructor, an SP can also be used in examinations, because it provides a relatively standardized and consistent situation for every student [12].

In audiology, SPs have been used for case-history taking training [3,4,13] and for training students to provide counseling or clinical feedback to the client [14-16]. Within the scope of this study, the use of an SP for case-history taking has been investigated only by Wilson, et al. [13] and Hughes, et al. [4]. Wilson, et al. [13] investigated the use of an SP, role-playing and computer-based simulations (CBS) among 19 audiology students in Australia. The students underwent five weeks of role-playing and CBS training, followed by two assessment sessions with an SP that included one feedback session from the instructor. The students' perceptions of simulation learning, including the SP training, were positive, suggesting potential for implementing simulation in audiology training. Hughes, et al. [4] evaluated students' clinical performance in history taking and delivering audiological feedback using SP and seminar (role-play) training. In their cross-over trial, the first group initially underwent SP training and the second group underwent seminar training. After the training and intermediate assessments, the groups reversed their type of training: the first group underwent seminar training and the second group underwent SP training. The authors found no significant benefit of SP training (considered high-fidelity simulation) over seminar training (considered low-fidelity training) and concluded that seminar training was sufficient for training case-history taking among first-year master's students in audiology. Given their conclusion, institutions could also take into account the lower cost of low-fidelity role-play training compared with high-fidelity SP training.
Whilst the findings of Hughes, et al. [4] support a trade-off between training benefit and cost, it can be argued that the quality of learning should be the priority whenever the cost is affordable.

One of the important components of any simulation exercise is the feedback given to the student [17]. SP training can be conducted with or without feedback. Feedback can be provided by an instructor or by the SP [18,19], and each approach has its own strengths and weaknesses. For example, using the SP for feedback is advantageous because recruiting an SP costs much less than recruiting a clinical instructor. Feedback given by SPs has been reported to enhance students' communication skills and their ability to demonstrate gentleness and comfort [19]. The disadvantage is that feedback delivered by an SP can be misleading, because the SP may not be properly trained to gauge student competency [18,19]. On the other hand, SP training with feedback from an instructor can promote self-reflective learning, especially when the self-reflection session is facilitated by a trained instructor [20,21]. Both Hughes, et al. [4] and Wilson, et al. [13] included feedback in their audiology SP training studies. However, neither study systematically evaluated whether feedback from an instructor benefits students compared with students who receive no feedback and reflect on their own strengths and weaknesses.

In medicine, feedback has been reported to enhance student performance in various simulation-based training programs [22,23]. To our knowledge, no study has systematically investigated the influence of SP training with instructor feedback on audiology students' case-history taking performance. Therefore, the primary aim of the present study was to determine whether SP training, in addition to the standard role play, is an effective learning tool for case-history taking compared with role play alone. The secondary aim was to ascertain whether SP training with feedback from a clinical instructor is more effective than SP training without feedback.

Subjects and Methods

Subjects

Twenty-six second-year undergraduate audiology students (24 female and two male) from the International Islamic University Malaysia (IIUM), Kuantan campus, participated. These students had not yet entered their clinical practicum but had completed the fundamental courses in hearing science and basic audiometry. Three clinical instructors, each with more than five years' experience, participated in this study. Their role was to assess performance and to provide feedback (where applicable) during the SP training. Seven inexperienced actors aged 23 to 26 years served as SPs. The SPs were undergraduate students from the Faculty of Health Sciences of IIUM who had volunteered to participate and were randomly selected.

Study design

The study protocol received unconditional approval from the IIUM Research Ethics Committee (Reference number: 2018-146). The study was conducted during a regular semester of the IIUM academic calendar in two lecture rooms at the Department of Audiology and Speech-Language Pathology, Faculty of Allied Health Sciences. Both lecture rooms are well equipped for SP and role-play/seminar training (networked computer, overhead projector, digital projector and audio system). A mobile video camera was used to record the training sessions and assessments.

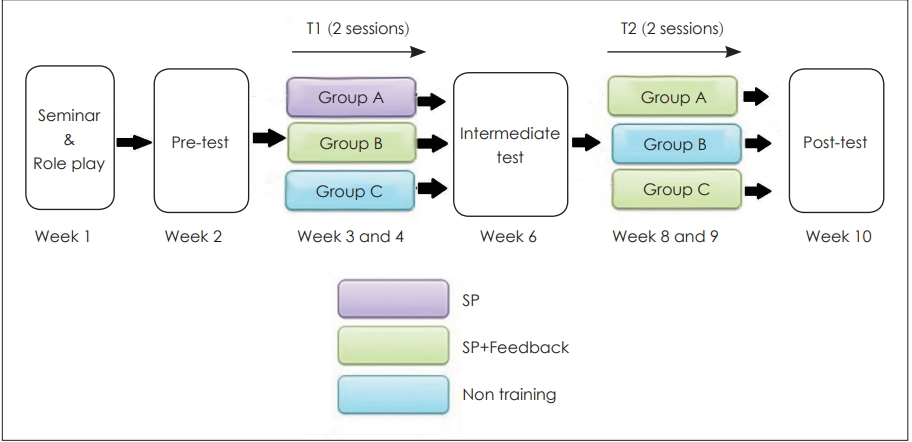

The study began in Week 1 and ended in Week 10 of the same semester. Fig. 1 summarizes the study procedures. The study used a cross-over trial (similar to Hughes, et al. [4]) with two interventions (SP training with and without instructor feedback) and one control condition without additional intervention (baseline role-play/seminar training only). The cross-over design ensured that SP training was not withheld from any student, so no student remained in the control group throughout the academic year.

Prior to data collection, the twenty-six students were randomly assigned to three groups (A, B, and C) using the 'Random Team Generator Online' application. Groups A and B consisted of nine students each and Group C of eight students. Initially, Group A underwent SP training without feedback from an audiology instructor (hereinafter SP-feedback), Group B underwent SP training with feedback from an audiology instructor (hereinafter SP+feedback), and Group C was the control group with no additional training.
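The study used the 'Random Team Generator Online' application for allocation. An equivalent allocation can be sketched with Python's standard `random` module; this is not the tool the authors used, and the student IDs, the seed and the function name `assign_groups` are hypothetical. Shuffling once and slicing the shuffled list into blocks of 9, 9 and 8 gives every possible split into groups of those sizes the same probability.

```python
import random


def assign_groups(student_ids, sizes=(("A", 9), ("B", 9), ("C", 8)), seed=None):
    """Randomly split students into labelled groups of fixed size."""
    total = sum(n for _, n in sizes)
    if len(student_ids) != total:
        raise ValueError(f"expected {total} students, got {len(student_ids)}")
    rng = random.Random(seed)  # seeded generator so the allocation can be reproduced
    shuffled = list(student_ids)
    rng.shuffle(shuffled)
    groups, start = {}, 0
    for label, n in sizes:
        groups[label] = shuffled[start:start + n]  # consecutive slice of the shuffled list
        start += n
    return groups


students = [f"S{i:02d}" for i in range(1, 27)]  # hypothetical IDs for 26 students
groups = assign_groups(students, seed=2018)
for label, members in groups.items():
    print(label, len(members))
```

Recording the seed makes the allocation auditable, which an online generator does not necessarily provide.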

After the pre-test, the first block of training was conducted in two sessions over a two-week period (training 1; T1), after which an intermediate test was conducted. All groups then switched training as follows: Groups A and C underwent SP+feedback, and Group B became the control group. After the intermediate test, training was again conducted in two sessions over two weeks (training 2; T2). A post-test was conducted in the week after T2. Table 1 and Fig. 1 summarize the training type of each group before (T1) and after (T2) the cross-over. In summary, Groups B and C each received a single block of SP+feedback training, either before or after the intermediate test, whilst Group A was the only group that received both types of SP training (SP-feedback and SP+feedback).
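The cross-over allocation described above can be written down as a small lookup table, which makes it easy to check which comparison each assessment supports. This is an illustrative encoding only; the names `SCHEDULE`, `BLOCK_ENDED_BY`, `training_before`, `groups_receiving` and the label "none" are hypothetical.

```python
# Training received by each group in each block of the cross-over design.
SCHEDULE = {
    "A": {"T1": "SP-feedback", "T2": "SP+feedback"},
    "B": {"T1": "SP+feedback", "T2": "none"},
    "C": {"T1": "none", "T2": "SP+feedback"},
}

# Each normalized gain is measured at the test that closes a training block.
BLOCK_ENDED_BY = {"intermediate": "T1", "post": "T2"}


def training_before(group: str, test: str) -> str:
    """Training a group received in the block that ends at `test`."""
    return SCHEDULE[group][BLOCK_ENDED_BY[test]]


def groups_receiving(training: str) -> list:
    """Groups that received `training` in either block."""
    return sorted(g for g, blocks in SCHEDULE.items() if training in blocks.values())


print([training_before(g, "intermediate") for g in "ABC"])  # ['SP-feedback', 'SP+feedback', 'none']
print([training_before(g, "post") for g in "ABC"])          # ['SP+feedback', 'none', 'SP+feedback']
print(groups_receiving("SP-feedback"))                       # ['A']
```

Reading the two lists side by side shows the comparisons the design supports: at the intermediate test, SP-feedback versus SP+feedback versus no additional training; at the post-test, two SP+feedback groups versus one group without additional training.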

Experimental procedures

Baseline (seminar and role-play sessions)

All students learned case-history taking in a lecture and a role-play practical session in Week 1 of the semester, prior to the SP training. The instructor (third author) taught the students all the relevant information on case-history taking in a one-hour lecture. During the following hour, all students had the opportunity to role-play with the instructor and two tutors. The role-play case was an adult who had difficulty hearing in background noise and was experiencing tinnitus. No baseline assessment was conducted before the seminar and role-play session.

SP training

Seven cases were developed from audiology cases at the IIUM Hearing and Speech clinic. All cases covered areas of audiology history taking, including patient concerns about hearing, tinnitus, vertigo, otological history, facial numbness, noise exposure and head and neck injuries. To require the students to apply appropriate case-history strategies, each case specified certain attributes of the SP. For example, in one case the SP acted as someone who was overly talkative, and in another as someone who was relatively quiet and reluctant to speak. The SP attributes differed from case to case depending on the nature of the case. The seven cases were used for the seven points of training and assessment (that is, three assessments and four SP training sessions). The cases were not randomized across these seven points; they were assigned by the authors without systematic criteria (for instance, case 1 was used for the pre-test, case 2 for the first training session, and so on). Without case randomization, student performance at any point could have been affected by the type of case. However, at each assessment and training session, all students had the same case regardless of group (except students without training).

The cases were vetted by three audiology instructors, including the first and third authors. The seven selected cases met the learning outcomes for second-year audiology students, with areas of concern limited to basic hearing and balance disorders (complex cases, such as auditory processing disorder, were excluded). Cases designed for educational purposes are commonly categorized by level of difficulty, for example, easy, intermediate or difficult [24]. Because the case-history training was aimed at second-year rather than third- or final-year students, all cases were restricted to a basic level of difficulty. Because patient concerns vary and can be unique to each case, the instructors used only the actual patient concerns stated in the patient file to develop each case, without adding any points beyond the actual case.

Prior to data collection, the SPs were trained by two semi-professional actors (with an audiology background) to portray their characters' personality traits, including body language and emotion. SP training consisted of several tutorial sessions that included discussions and role plays. Before the study, the researcher also briefed the trainers on each of the seven selected cases and the characters the SPs would portray.

To ensure that all SPs presented each case consistently across training sessions with different students, the instructors evaluated each SP's acting using a validated acting rubric [25]. The rubric assesses five items (body language, voice, characterization, emotional commitment, and memorization) on a six-point Likert scale. Only SPs who scored more than half of the total marks were considered ready for SP training sessions. The SPs were evaluated on each case in the same week, prior to the corresponding SP training or assessment.
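The readiness criterion above can be made concrete with a small check. This is a minimal sketch, not the authors' procedure: it assumes each of the five rubric items is scored as an integer from 1 to 6, so the total is out of 30 and "more than half" means a total above 15. The function name `sp_ready` and the score dictionary format are hypothetical.

```python
# Hypothetical readiness check for an SP, based on the acting rubric described above.
RUBRIC_ITEMS = (
    "body language",
    "voice",
    "characterization",
    "emotional commitment",
    "memorization",
)
MAX_PER_ITEM = 6  # assumption: six-point Likert scale scored 1..6


def sp_ready(scores: dict) -> bool:
    """Return True if the SP's total rubric score exceeds half of the maximum."""
    missing = [item for item in RUBRIC_ITEMS if item not in scores]
    if missing:
        raise ValueError(f"missing rubric items: {missing}")
    for item in RUBRIC_ITEMS:
        if not 1 <= scores[item] <= MAX_PER_ITEM:
            raise ValueError(f"{item!r} score out of range: {scores[item]}")
    total = sum(scores[item] for item in RUBRIC_ITEMS)
    max_total = len(RUBRIC_ITEMS) * MAX_PER_ITEM  # 30 under the assumption above
    return total > max_total / 2  # strictly more than half


# Usage with made-up ratings: a total of exactly 15 is not enough.
print(sp_ready({item: 3 for item in RUBRIC_ITEMS}))  # False (15 of 30)
print(sp_ready({item: 4 for item in RUBRIC_ITEMS}))  # True (20 of 30)
```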

In SP training, each participant was given basic information about the patient and was required to take the patient's history within 10 minutes. For the SP+feedback group, the clinical instructor conducted a feedback session after each student had completed their case-history taking with the SP. In the feedback session, the instructor highlighted both the strengths and the weaknesses of the student's performance by asking the student to reflect on the case-history session they had just completed. Because the case-history training sessions were video recorded by the researcher (with informed consent obtained a priori), the instructor occasionally used the recording to promote self-reflective learning. One instructor was assigned to each group, randomly for each session; therefore, a student was not necessarily trained by the same instructor in every session. Students undergoing SP training without feedback received no dedicated feedback; instead, they were encouraged to reflect on their own performance without an instructor.

Evaluation of SP training

To our knowledge, the Audiology Simulated Patient Interview Rating Scale (ASPIRS) is the only validated tool in the literature for examining audiology students' clinical competencies in case-history taking with an SP [3]. ASPIRS was modified from the original Standardized Patient Interview Rating Scale, which was designed to evaluate speech pathology students' case-history taking with an SP [26]. ASPIRS includes six items assessing non-verbal communication, interviewing skills, interpersonal skills, professional practice skills, verbal communication, and clinical skills, each on a 5-point Likert scale. In general, the first five items assess the student's communication skills in case-history taking (for example, building rapport, maintaining appropriate eye contact and using appropriate language), and the last item assesses the student's ability to obtain information from the patient. For a detailed description of ASPIRS, please refer to Hughes, et al. [3]. The tool consists of two parts: the first evaluates the student's interaction with the SP during case-history taking, and the second evaluates the student's interaction with the SP when delivering feedback to the patient.

The maximum total ASPIRS score is 60 marks: 30 marks for case-history taking (6 items × 5 points) and 30 marks for feedback (6 items × 5 points). ASPIRS was validated among 24 pre-clinical audiology students undertaking a Master of Audiology in Australia [3]. Because ASPIRS covers the evaluation of audiology students' interactions with an SP during case-history taking, we used it in the present study. However, only the case-history taking part was used, so the maximum score for each ASPIRS assessment was 30 marks (6 items × 5 marks) per student.
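The case-history score used here is the sum of six item ratings. The sketch below illustrates this under two assumptions that the text does not state: each item is rated as an integer from 1 to 5, and only the six case-history items are passed in. The item keys paraphrase the six ASPIRS domains listed above; the function name `aspirs_case_history_total` is hypothetical.

```python
# Hypothetical scorer for the case-history part of ASPIRS (6 items x 5 points = 30).
CASE_HISTORY_ITEMS = (
    "non-verbal communication",
    "interviewing skills",
    "interpersonal skills",
    "professional practice skills",
    "verbal communication",
    "clinical skills",
)
MAX_ITEM_SCORE = 5  # 5-point Likert scale (assumed to be scored 1..5)


def aspirs_case_history_total(ratings: dict) -> int:
    """Sum the six case-history item ratings; the maximum possible total is 30."""
    if set(ratings) != set(CASE_HISTORY_ITEMS):
        raise ValueError("expected exactly the six case-history items")
    for item, value in ratings.items():
        if not 1 <= value <= MAX_ITEM_SCORE:
            raise ValueError(f"{item!r} rating out of range: {value}")
    return sum(ratings.values())


MAX_TOTAL = len(CASE_HISTORY_ITEMS) * MAX_ITEM_SCORE  # 30

example = dict.fromkeys(CASE_HISTORY_ITEMS, 4)  # made-up ratings
print(aspirs_case_history_total(example), "/", MAX_TOTAL)  # 24 / 30
```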

All students underwent a pre-test after the role-play/seminar session and before T1 (Fig. 1). The pre-test measured what each student had learned by the beginning of the study, providing a baseline score for comparison after the T1 training. After T1 (with their respective training types), all students completed an intermediate test using the same procedure as the pre-test. The intermediate test was necessary because each group's training type changed after it, so performance had to be assessed at the end of the first training block. Finally, all students completed the post-test under the same arrangement as the pre-test and intermediate test.

In all three tests, the procedure for taking a case history was the same as in SP training, except that no feedback was given to the student. The clinical instructor scored each student's case-history taking with the SP using ASPIRS in real time during each test, and was allowed to review the video recording before finalizing the marks if they felt it necessary.

Each group had a different clinical instructor and SP, although all groups had the same case (in both assessments and training). The three SPs and the clinical instructors for training or assessment were randomly assigned to the groups. Using one instructor and one SP per group reduced the acting and assessment time. It also avoided the need for a very long quarantine period for students (to prevent discussion of the cases) and reduced fatigue among both SPs and instructors. However, these strategies might reduce the reliability of the assessments and training, because of the potential bias of having a single examiner per group and potential differences between examiners in marking and between SPs in acting. To counter this, the researchers provided specific training to all SPs to standardize their acting. In addition, the clinical instructors held a dedicated discussion before data collection to familiarize themselves with ASPIRS and to standardize how they scored its items. Note that only three of the seven SPs were involved at each assessment (or training) point, depending on their availability.

Statistical analysis

Preliminary data analysis showed high variation in the students' baseline (pre-test) scores. This variation suggested potential statistical bias caused by the students' pre-existing performance levels. An initial statistical analysis using raw ASPIRS scores supported this concern, yielding conflicting findings between different sets of variables. To overcome this problem, the normalized gain score was used instead.

The normalized gain score reflects the true "gain" in learning and is independent of the student's baseline score [27]. It expresses improvement relative to the maximum possible improvement. For ASPIRS, the maximum score was 30 marks, so the maximum possible improvement was the difference between 30 and the student's score at the start of the interval. For each training type, the improvement score (at the intermediate test and at the post-test) was calculated for each student and then converted to a normalized gain score. For example, the normalized gain for the post-test was calculated as (post-test score - intermediate score)/(30 - intermediate score).
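As a worked illustration of the formula above, the function below computes the normalized gain for one student over one interval. It is a sketch with invented scores; the function name is hypothetical, and the guard against a starting score of 30 is an added assumption, since the formula is undefined there and the text does not describe how such cases were treated.

```python
# Normalized gain: improvement as a fraction of the improvement still available.
MAX_SCORE = 30  # maximum ASPIRS case-history score


def normalized_gain(before: float, after: float, max_score: float = MAX_SCORE) -> float:
    """Return (after - before) / (max_score - before).

    `before` is the score at the start of the interval (pre-test for the
    intermediate gain, intermediate test for the post-test gain).
    """
    if not (0 <= before <= max_score and 0 <= after <= max_score):
        raise ValueError("scores must lie between 0 and max_score")
    if before == max_score:
        # Assumption: the text does not say how a perfect starting score was handled.
        raise ValueError("normalized gain is undefined when the starting score is the maximum")
    return (after - before) / (max_score - before)


# Made-up scores, not study data.
print(normalized_gain(18, 24))  # 0.5: 6 of the 12 available marks were gained
print(normalized_gain(10, 15))  # 0.25: 5 of the 20 available marks were gained
print(normalized_gain(20, 15))  # -0.5: a drop gives a negative gain
```

The first two students improve by a similar number of raw marks, yet the student who started higher receives the larger normalized gain because less room for improvement remained.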

Normalized gains were calculated for the intermediate test (between the pre-test and intermediate test) and for the post-test (between the intermediate test and post-test) for each group. The Kruskal-Wallis test, a non-parametric alternative to one-way analysis of variance (ANOVA), was used at a 95% confidence level to test differences in median normalized gain scores between the three groups, with the Mann-Whitney U-test as the post-hoc analysis. Non-parametric tests were used because the data violated parametric assumptions, including normality and homogeneity of variance, and these violations could not be resolved by transforming the data. The Wilcoxon signed-rank test, also at a 95% confidence level, was used for within-group comparisons of the median normalized gain scores between the intermediate test (intermediate versus pre-test) and the post-test (post-test versus intermediate test).
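To make the between-group test concrete, the sketch below computes the Kruskal-Wallis H statistic with the usual correction for tied ranks, using only the standard library. This is possible because, with three groups, H is referred to a chi-square distribution with two degrees of freedom, whose survival function is exactly exp(-H/2). Using this chi-square approximation is an assumption about how p-values were obtained; the text does not name the software used. In practice, `scipy.stats.kruskal`, `scipy.stats.mannwhitneyu` and `scipy.stats.wilcoxon` provide this test and the post-hoc and within-group tests described above. The function names and input values are hypothetical toy examples, not study data.

```python
import math
from collections import Counter


def average_ranks(values):
    """Rank values 1..n, giving tied values the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # convert 0-based positions to 1-based ranks
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def kruskal_wallis_3(a, b, c):
    """Kruskal-Wallis H and chi-square p-value for exactly three groups."""
    groups = (a, b, c)
    if any(len(g) == 0 for g in groups):
        raise ValueError("each group needs at least one observation")
    pooled = [x for g in groups for x in g]
    n = len(pooled)
    tie_term = sum(t ** 3 - t for t in Counter(pooled).values())
    if tie_term == n ** 3 - n:
        raise ValueError("all observations are identical; H is undefined")
    ranks = average_ranks(pooled)
    rank_sum_term, start = 0.0, 0
    for g in groups:
        group_ranks = ranks[start:start + len(g)]
        rank_sum_term += sum(group_ranks) ** 2 / len(g)
        start += len(g)
    h = 12 / (n * (n + 1)) * rank_sum_term - 3 * (n + 1)
    h /= 1 - tie_term / (n ** 3 - n)  # correction for tied ranks
    p = math.exp(-h / 2)  # chi-square survival function with 2 degrees of freedom
    return h, p


h, p = kruskal_wallis_3([1, 2, 3], [4, 5, 6], [7, 8, 9])
print(round(h, 3), round(p, 4))  # 7.2 0.0273
```

With only eight or nine students per group, as here, the chi-square approximation is rough, which is one reason to cross-check results against an established statistics package.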

Results

Comparison of the normalized-gain ASPIRS scores among the three groups

Table 2 summarizes the median and interquartile range of the normalized gain in ASPIRS case-history scores at the intermediate test and post-test for all three groups (A, B, and C). The last row of Table 2 summarizes the between-group comparisons using the Kruskal-Wallis test.

Statistically significant differences in median normalized gain ASPIRS scores were found between the three groups at the intermediate test (p<0.001) and at the post-test (p<0.05). Table 3 summarizes the post-hoc Mann-Whitney U-test results. At the intermediate test, the groups with additional training [Groups A (SP-feedback) and B (SP+feedback)] had significantly higher normalized gain scores than the group without additional training (Group C) (p<0.05). For the same interval, the median normalized gain score was also marginally higher in the SP+feedback group (Group B) than in the SP-feedback group (Group A) (p<0.05).

In contrast, at the post-test, only one of the two SP+feedback groups (Group C) had a significantly higher median normalized gain score than the group without additional training (Group B) (p<0.05). In addition, Groups A and C, which received the same training (SP+feedback), did not differ significantly in their median normalized gain scores (p>0.05).

Comparison of the normalized-gain scores between different training intervals within groups

The Wilcoxon signed-rank test showed no significant change in median normalized gain scores between the two training intervals (intermediate versus post-test) within Groups A and B (p>0.05), as summarized in Table 4. In Group A, student performance after SP+feedback training did not differ from that after the preceding SP-feedback training. In Group B, performance did not differ between the SP+feedback interval (intermediate test) and the interval without additional training (post-test). In Group C, the median normalized gain score was significantly higher after SP+feedback training (post-test) than after no additional training (intermediate test) (p<0.05).

Discussion

The primary aim of the present study is to determine whether the SP training in addition to the standard role play is an effective learning tool for case-history taking compared to role play alone. The secondary aim is to ascertain if SP training with feedback given by a clinical instructor is more effective than SP training without feedback.

In general, the findings of the present study support the use of SP training (with or without feedback) for case-history taking in addition to seminar/role-play training. Outcomes for students who received SP training were generally better than for students without additional training beyond the standard seminar/role play. However, not all findings were consistent with this conclusion. Examples are the non-significant between-group difference at the post-test between Group A (SP+feedback) and Group B (no additional training), and the non-significant within-group difference for Group B between the intermediate test (SP+feedback) and the post-test (no additional training). Because the study used a cross-over design, an interaction between previous learning experience and the subsequent training technique cannot be ruled out, especially for students in Group B. We speculate that students in Group B may have retained some learning from their SP+feedback training after the intermediate test. This may explain the non-significant within-group difference for Group B and the non-significant post-test difference between Groups A and B, despite Group B having received no additional training. However, this notion could only be tested with a dedicated retention experiment, which was not possible within the time and resource constraints of our study. In general, the benefit of SP training (with or without feedback) in the current study is consistent with previous SP studies of case-history taking in audiology [4,13].

Furthermore, the between-group analysis showed only small differences between SP training with and without feedback. The within-group analysis for Group A showed no significant difference in normalized gain scores between these two types of training. This lack of significance suggests that SP training without feedback may be sufficient to train at least second-year audiology students in effective case-history taking. One plausible explanation is that the baseline seminar and role-play training before the SP training may have been sufficient for students to know, theoretically, the best practice in history taking and to reflect on their own case-history performance; therefore, they needed SP training only for practice, without additional feedback from an instructor.

It is also worth noting that the median normalized gain in case-history scores with SP training in the present study was only approximately 10 to 40%. Because the SP training was provided to second-year students, who had not yet entered a real clinical placement and had another two years before graduating, we consider this improvement, although small, to be clinically significant for this group of students.

The findings of the present study are limited to its participants, facilities, the students' year of study and the SP protocols used. In particular, the study was conducted during a semester of an active audiology program, which prevented the researchers from conducting a proper randomized controlled trial. Another limitation is the absence of a baseline assessment before the seminar and role-play sessions, which prevents us from understanding how much learning occurred during that training. The lack of case randomization across training and assessments could have influenced our results if, for example, a particularly easy case was selected for a training or assessment session; with an easy case, differences in student performance could be minimal with or without SP training. Finally, the difficulty level of each selected case was not systematically evaluated, a step that would have been particularly important in the absence of case randomization. As highlighted, all cases in the present study were set to a basic level of difficulty. Therefore, the lack of significance in some findings could be because the cases (training or assessment) were so easy that the outcomes of the various SP training approaches could not be differentiated. Future studies should address these factors, for example by investigating the effectiveness of SP training with feedback in a properly randomized controlled trial, counterbalancing the cases among students, and systematically evaluating the difficulty of each SP case. Future studies could also examine the effectiveness of SP training with feedback among third- and fourth-year students in their clinical years.

In conclusion, SP training is a good learning and training tool for case-history taking in addition to standard role-play and seminar training. However, integrating feedback into SP training had only a very marginal effect, at least for second-year students who had not yet entered their clinical placement. The conclusions of this study are limited to the SP training program conducted at our institution, and careful consideration is needed before applying them to other institutions or to a different SP program.

Acknowledgements

The authors wish to acknowledge the Ministry of Higher Education of Malaysia through the Fundamental Research Grant scheme (FRGS) (FRGS15-236-0477) and the International Islamic University Malaysia through the Research Initiative Grant Scheme (RIGS) (RIGS 15-035-0035) for funding this research.

Notes

Conflicts of interest: The authors have no financial conflicts of interest.

Author Contributions

Conceptualization: All authors. Data curation: Maryam Kamilah Ahmad Sani and Ahmad Aidil Arafat Dzulkarnain. Formal analysis: Maryam Kamilah Ahmad Sani and Ahmad Aidil Arafat Dzulkarnain. Funding acquisition: Ahmad Aidil Arafat Dzulkarnain. Investigation: Maryam Kamilah Ahmad Sani and Ahmad Aidil Arafat Dzulkarnain. Methodology: Maryam Kamilah Ahmad Sani, Ahmad Aidil Arafat Dzulkarnain, and Sarah Rahmat. Project administration: Maryam Kamilah Ahmad Sani and Ahmad Aidil Arafat Dzulkarnain. Resources: All authors. Supervision: Ahmad Aidil Arafat Dzulkarnain. Validation: Maryam Kamilah Ahmad Sani, Ahmad Aidil Arafat Dzulkarnain, and Sarah Rahmat. Visualization: All authors. Writing—original draft: Maryam Kamilah Ahmad Sani, Ahmad Aidil Arafat Dzulkarnain, and Sarah Rahmat. Writing—review & editing: All authors.