J Audiol Otol 2020;24(3)

Abstract

Background and Objectives

The aim of this study is to evaluate the effect of music training on the characteristics of auditory perception of speech and music. The perception of speech and music stimuli was assessed across their respective stimulus continuum and the resultant plots were compared between musicians and non-musicians.

Subjects and Methods

Thirty musicians with formal music training and twenty-seven non-musicians participated in the study (age: 20 to 30 years). They were assessed for identification of consonant-vowel syllables (/da/ to /ga/), vowels (/u/ to /a/), vocal music notes (/ri/ to /ga/), and instrumental music notes (/ri/ to /ga/) across their respective stimulus continua. Each continuum contained 15 tokens with equal step size between adjacent tokens. The resultant identification scores were plotted against each token and were analyzed for the presence of a categorical boundary. If a categorical boundary was found, the plots were analyzed for six parameters of categorical perception: the point of 50% crossover, lower edge of categorical boundary, upper edge of categorical boundary, phoneme boundary width, slope, and intercept.

Results

Overall, the results showed that both speech and music are perceived differently in musicians and non-musicians. In musicians, both speech and music are categorically perceived, while in non-musicians, only speech is perceived categorically.

Conclusions

The findings of the present study indicate that music is perceived categorically by musicians, even if the stimulus is devoid of vocal tract features. The findings support that the categorical perception is strongly influenced by training and results are discussed in light of notions of motor theory of speech perception.

Fine-grained auditory discrimination assessed through a continuum of speech tokens typically reveals categorical perception, subject to the phonetic class under study [1]. Categorical speech perception is necessary for retaining perceptual constancy when there is variation in multiple acoustic dimensions with respect to the speaker’s pitch, tempo, and timbre [2]. Categorical boundaries for speech are reported to emerge early in life [3], but the characteristics of these boundaries are modified by exposure to the native language. It is believed that categorical perception plays a vital role in speech acquisition [3] and in phoneme-to-grapheme correspondence [4], a prerequisite for the development of reading and writing skills.

The idea of the distinction between categorical perception and speech perception is a debatable issue. The proponents of motor theories [5] believe that speech is perceived in a special mode and phonetic units are gated into specialized neural units. However, there is ample evidence that suggests the absence of categorical boundaries in speech continuum and the presence of categorical boundaries in non-phonetic tokens [1,6]. These studies hint at a definite influence of certain factors on the characteristics of perception, other than stimulus associated with the speech versus non-speech category.

One potentially recognizable factor, among others, is training in the stimulus contrast under study. In the early years of life, human beings are exposed to various speech contrasts of their native language. As a result, there would be systematic changes in the perceptual characteristics of these contrasts compared to the contrasts to which they are not frequently exposed (for example, non-speech contrasts). Based on the equivocal evidence available for the presence of categorical perception in speech and non-speech stimuli [6], we hypothesize that non-speech contrasts, if trained, demonstrate characteristics of categorical perception akin to those of speech tokens.

Music training is known to have a positive influence on human beings. In the auditory domain, musicians are shown to outperform non-musicians in fine-grained discrimination of acoustic signals [7,8], speech perception in noise [9], and temporal processing abilities [10]. Enhanced fine-grained discrimination abilities are found in musicians, which could be reflected as a steeper categorical function in speech discrimination; earlier studies [11-13] support this notion. Musicians have been observed to have a steeper discrimination function in the /a/-/u/ vowel continuum [14] and better discrimination of non-speech sounds [15].

Indian classical music has various “Ragas” (scale or melodic framework) that form the basis of all compositions. If one sings a Raga in alapana (Raga sung with sustained /a/), the pitch of /a/ will correspond to the pitch of different “Swaras” (notes) in that Raga. According to the theories of vowel normalization [16], alapana of two adjacent swaras (notes) would be normalized and perceived as the same vowel. However, this is unlikely to happen with musicians as they use pitch as the primary cue to discriminate different swaras. Earlier studies [13,17,18] have shown that music is categorically perceived in musicians. Nonetheless, such studies have only analyzed the musicians. The discrimination of alapana of two different swaras shall be influenced by music training and thus, the characteristics of their discrimination must be different between musicians and non-musicians. Such a comparison may throw more light on the acoustic versus phonetic nature of categorical perception.

To further verify the acoustic versus phonetic nature of categorical perception, assessing the perceptual characteristics of instrumental music stimuli between musicians and non-musicians would be beneficial. Instrumental music is devoid of vocal tract features and therefore will not involve speech-specific processes during its perception. If an instrumental music stimulus is perceived categorically, it would clearly indicate that categorical perception is not a speech-specific phenomenon, and thereby aid in supporting or refuting the inferences of the theories of speech perception accordingly. On the contrary, if the instrumental music is not discriminated categorically, it would suggest that categorical perception is speech specific.

Hence, the purpose of our study is to evaluate the characteristics of discrimination in speech and music through a stimulus continuum in individuals with and without music training. The parameters of categorical perception of speech and music were compared between musicians and non-musicians to analyze the effect of music training on them.

A static group comparison research design and purposive sampling were used in the study. Fifty-seven adults (male: 33, female: 24) aged between 20 and 30 years (mean age: 24.4 years) participated in the study. Of them, 30 were musicians, while the rest were non-musicians. Participants in the “musician group” were formally trained in music for a minimum duration of five years (mean duration: 10.13 years). Among the 30 in this group, 17 were vocal musicians, seven were instrumental musicians, and the remaining six were trained in both. Besides, 22 participants from the musician group were actively involved in musical concerts, while the rest reported to have been actively practicing music on a daily basis.

The 27 participants in the non-musician group had neither undergone any formal training in music nor were habitual listeners of music (they did not listen to any kind of music for more than 3 to 5 hours a week). Sample size for the study was estimated based on a pilot study (15 participants in each group) for a “β” of 0.20 and “p” of 0.05 using the G*Power software, version 3.1 [19], and was found to be 24 in each group.

All the participants were assessed for their normal hearing sensitivity using pure tone audiometry. Their pure tone hearing thresholds were within 15 dB (HL) at octave frequencies between 0.25 and 8 kHz. In immittance audiometry, they had type “A” or “As” tympanograms and presence of acoustic reflexes, indicative of normal middle ear functioning. In order to screen out individuals with auditory processing disorders, Screening Checklist for Auditory Processing in Adults (SCAPA) [20] was administered to all the participants and all of them passed the test. All the tests were carried out in a sound treated room where the ambient noise levels were within the permissible limits as per American National Standards Institute, ANSI [21].

An informed written consent was taken from all the participants before their recruitment for the study. The methods conformed to the ethical guidelines stipulated for bio-behavioral research in humans [22], which is in line with the Declaration of Helsinki. The study was also approved by the ethical committee of the Institutional Research Board of All India Institute of Speech and Hearing.

The presence of categorical perception was determined using stimulus continua of speech and music stimuli. A stimulus continuum between the syllables /da/ (voiced, dental stop consonant) and /ga/ (voiced, velar stop consonant), and another between the vowels /u/ and /a/, were employed to study categorical perception in speech. A stimulus continuum between alapana of /ri/ and /ga/ of Mayamalavagowla ra:ga (one of the basic Ragas in Carnatic music) and a continuum between these swaras played on the violin were used to study categorical perception in music.

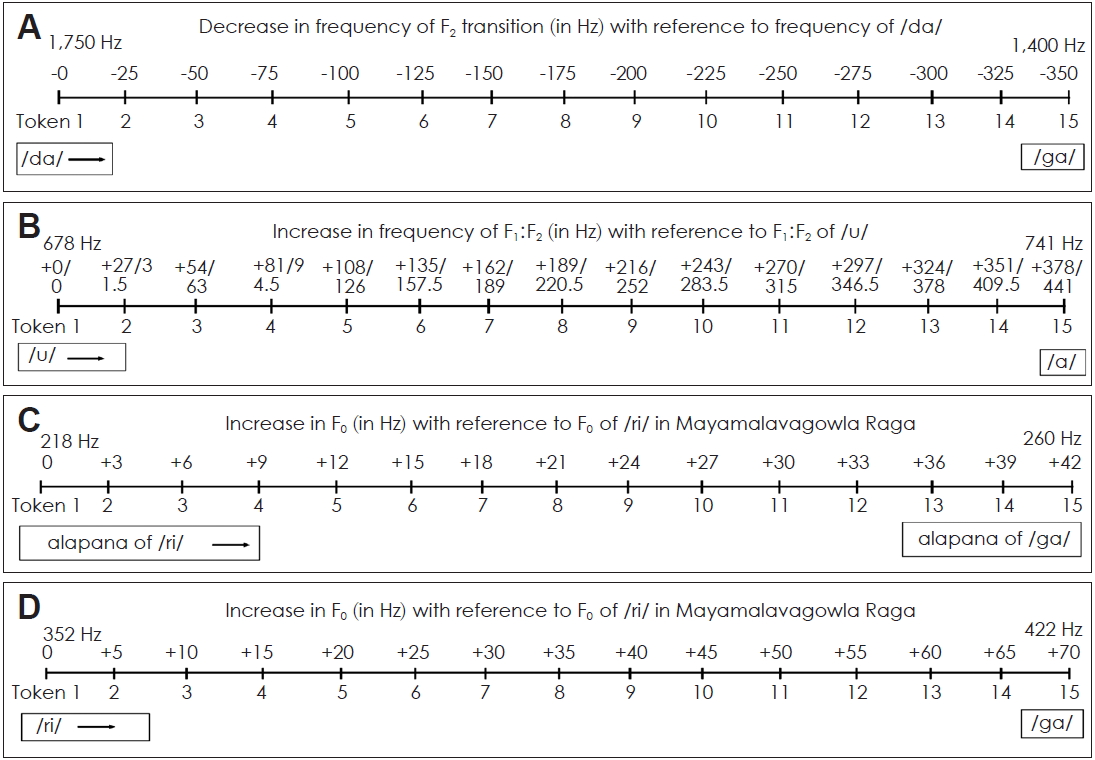

The vowels (/u/ and /a/) and the syllables (/da/ and /ga/) were uttered by an adult female who is a native speaker of Kannada (a Dravidian language, mostly spoken in the southern part of India), and the utterances were recorded using a unidirectional microphone kept at a distance of 6 cm from the mouth. The speaker had normal oro-motor mechanism and normal speech-language abilities. She was instructed to utter the syllables and vowels clearly, at a normal speech rate and in a neutral tone. The recorded stimuli were digitized with a sampling rate of 44,100 Hz and stored in a personal computer. The recorded samples were spectrally analyzed by a speech-language pathologist with 15 years of experience in acoustic analysis. Using Computer Speech Lab (CSL 4300) software (Kay Elemetrics, Lincoln Park, NJ, USA), the formant transition (onset and offset) of the second formant frequency (F2) in the syllables (/da/ and /ga/), and the first and second formant frequencies (F1 and F2) in the vowels (/u/ and /a/) were identified. Using the analysis-by-synthesis method in the CSL software, the frequency of the F2 transition was altered along the /da/ to /ga/ continuum and the frequency ratio of the first and second formants was altered along the /u/ to /a/ continuum. In the /da/ to /ga/ stimulus continuum, the onset of the second formant frequency was morphed from 1,750 Hz to 1,400 Hz in steps of 25 Hz to generate 15 tokens. Duration of each token was 300 ms. Fig. 1A represents the 15 tokens of the /da/ to /ga/ continuum used in the study. Similarly, the difference between F1 and F2 was varied from 678 Hz to 741 Hz to obtain 15 tokens of the /u/ to /a/ continuum. F1 and F2 were morphed in steps of 27 Hz and 31.5 Hz respectively (so that the difference between them changed in steps of 4.5 Hz) to synthesize the vowel continuum. Duration of each token was 300 ms. Fig. 1B represents the 15 tokens of the vowel /u/ to /a/ continuum used in the study.

In order to generate the continuum of vocal music tokens, a female professional singer was instructed to sing alapana of the ascending notes of Mayamalavagowla ra:ga, which was recorded using a unidirectional microphone kept at a distance of 6 cm from the mouth. The recording was digitized with a sampling rate of 44,100 Hz and stored in a computer. The recorded samples were spectrally analyzed using Praat software (version 5.3.55; https://www.praat.org) to identify the alapana of /ri/ and /ga/. These were then sliced from the original files and stored as two separate files. At the steady state of these two swaras, the sample was analyzed for fundamental frequency (F0). The F0 was morphed from 218 Hz (F0 of /ri/) to 260 Hz (F0 of /ga/) in steps of 3 Hz to obtain 15 tokens of the /ri/ to /ga/ vocal music continuum. Duration of each token was 300 ms. Fig. 1C represents the 15 tokens of the /ri/ to /ga/ vocal music continuum used in the study.

Further, to generate the stimulus continuum of instrumental music, a professional violinist was asked to play the arohana of Mayamalavagowla Raga. The auxiliary output (sound port) of the violin was routed directly to a computer and the output was saved in the computer. The violin output was recorded at a sampling rate of 44,100 Hz with 16-bit resolution. The recorded sample was analyzed spectrally using Praat software to identify the /ri/ and /ga/ swaras, and these were cut from the original file and saved as separate files. At the steady state of these swaras, the sample was analyzed to note down their F0. The F0 was morphed from 352 Hz (F0 of /ri/) to 422 Hz (F0 of /ga/) in steps of 5 Hz to obtain 15 tokens of the /ri/ to /ga/ instrumental music continuum. Duration of each token was 300 ms. Fig. 1D represents the 15 tokens of the instrumental music continuum from /ri/ to /ga/.
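The end points and step sizes reported for the four continua can be checked with a few lines of arithmetic. The sketch below is only an illustration in Python (the stimuli themselves were synthesized in CSL and Praat); the function name `continuum` and the dictionary keys are hypothetical labels, while the end-point values are those given in the text.

```python
def continuum(start_hz, stop_hz, n_tokens=15):
    """Return n_tokens equally spaced values from start_hz to stop_hz (inclusive)."""
    step = (stop_hz - start_hz) / (n_tokens - 1)
    return [start_hz + i * step for i in range(n_tokens)]


def step_size(tokens):
    """Step between adjacent tokens (negative when the parameter decreases)."""
    return tokens[1] - tokens[0]


# End points are taken from the text; the keys are illustrative labels only.
CONTINUA = {
    "syllable_F2_onset": continuum(1750, 1400),  # /da/ to /ga/
    "vowel_F1_F2_difference": continuum(678, 741),  # /u/ to /a/
    "vocal_F0": continuum(218, 260),  # sung /ri/ to /ga/
    "violin_F0": continuum(352, 422),  # violin /ri/ to /ga/
}

if __name__ == "__main__":
    for name, tokens in CONTINUA.items():
        print(name, len(tokens), step_size(tokens), tokens[0], tokens[-1])
```

With 15 tokens there are 14 steps, so the reported step sizes of 25 Hz, 4.5 Hz, 3 Hz, and 5 Hz follow directly from the reported end points.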

The participants were tested for their ability to identify the tokens. Each participant was independently tested by presenting the stimulus tokens to their right ear. The stimuli were presented using E-Prime software (Psychology Software Tools, Sharpsburg, MD, USA) and were delivered to the ear via Sennheiser PC 320 headphones. All the participants were tested for identification of tokens of all four stimulus types (syllable, vowel, vocal music, and instrumental music). Each token of an individual stimulus type was presented 10 times in a random order (10 presentations × 15 tokens = 150 stimuli per stimulus type). Each stimulus type was tested in a separate block. The participants were instructed to identify the stimulus that was heard (syllable, vowel, or swara, depending on the stimulus type being tested) in a two-alternative forced-choice method and indicate the same by pressing a computer key. The corresponding responses were saved in the E-Prime software. The approximate time taken to complete the whole test was 25 minutes and it was carried out in a single session with breaks of not more than 5 minutes. A break was given only if warranted.
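The structure of one test block (15 tokens, 10 randomized presentations each, two response alternatives) can be sketched as follows. This is a minimal illustration in Python, not the E-Prime script used in the study; `build_block`, `identification_scores`, and the `seed` argument are hypothetical names introduced here.

```python
import random


def build_block(n_tokens=15, n_repeats=10, seed=None):
    """Return a shuffled list of token numbers (1..n_tokens), each repeated n_repeats times."""
    rng = random.Random(seed)
    trials = [token for token in range(1, n_tokens + 1) for _ in range(n_repeats)]
    rng.shuffle(trials)
    return trials


def identification_scores(trials, responses, target, n_tokens=15):
    """Count, for every token, how many of its presentations were labelled `target`."""
    scores = [0] * n_tokens
    for token, response in zip(trials, responses):
        if response == target:
            scores[token - 1] += 1
    return scores


if __name__ == "__main__":
    trials = build_block(seed=1)
    print(len(trials))  # 150 presentations in one block
```

Tallying the responses per token gives a score between 0 and 10 for each of the two alternatives, which is the quantity plotted against token number in the analysis.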

The identification scores in each stimulus type were plotted against the corresponding stimulus token, and the resultant plots were analyzed qualitatively as well as quantitatively. Separate plots were constructed for each participant. The quantitative measures determined were 50% crossover, lower edge of categorical boundary (LECB), upper edge of categorical boundary (UECB), and phoneme boundary width (PBW). Fig. 2A represents the measures noted down in a representative plot with a single crossover. The 50% crossover is the token at which a 50% identification score is obtained. Often, it is the token where the two curves meet (token 9 in the representative plot). LECB is the token at which an 80% identification score is obtained (token 8 in the representative plot). UECB is the token at which a 20% identification score is obtained (token 10 in the representative plot). PBW is the difference between the LECB and UECB (2 in the representative plot). These parameters have been shown to be effective in delineating the differences in the perception of stimuli in a continuum in earlier studies [23-25]. Hence, they were considered for the quantitative analysis in this study. Further, a linear regression was used to fit the identification curve from the point of 80% identification to the point of 20% identification to estimate the slope and intercept.
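The six parameters can be illustrated with a short Python sketch. It rests on assumptions that the text does not specify: the 80%, 50%, and 20% points are located by linear interpolation between adjacent tokens, the regression is an ordinary least-squares fit over the whole-number tokens lying between the LECB and UECB, and the example scores are invented so that they reproduce the values described for the representative plot (tokens 8, 9, and 10). The function names are hypothetical.

```python
def crossing(scores, level):
    """Token position (1-based, may be fractional) where a falling curve first reaches `level`."""
    for i in range(len(scores) - 1):
        high, low = scores[i], scores[i + 1]
        if high >= level >= low and high != low:
            return (i + 1) + (high - level) / (high - low)
    return None


def fit_line(xs, ys):
    """Ordinary least-squares slope and intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def cp_parameters(scores, max_score=10):
    """Return crossover, LECB, UECB, PBW, slope and intercept, or None if no boundary is found."""
    lecb = crossing(scores, max_score * 8 / 10)
    crossover = crossing(scores, max_score * 5 / 10)
    uecb = crossing(scores, max_score * 2 / 10)
    if None in (lecb, crossover, uecb):
        return None
    tokens = [t for t in range(1, len(scores) + 1) if lecb <= t <= uecb]
    slope = intercept = None
    if len(tokens) >= 2:  # a line needs at least two points
        slope, intercept = fit_line(tokens, [scores[t - 1] for t in tokens])
    return {"crossover": crossover, "lecb": lecb, "uecb": uecb,
            "pbw": uecb - lecb, "slope": slope, "intercept": intercept}


if __name__ == "__main__":
    # Invented scores (out of 10) for the first category along the 15 tokens.
    example = [10, 10, 10, 10, 10, 10, 10, 8, 5, 2, 0, 0, 0, 0, 0]
    print(cp_parameters(example))
```

A narrower PBW and a slope of larger magnitude both indicate a sharper transition between the two categories.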

The qualitative analysis included noting down the morphology (nature of the identification curves) of the plots in terms of multiple crossovers (identification curves converge multiple times), no crossovers (identification curves do not converge) and single crossover (identification curves converge once). Examples of these patterns are depicted in Fig. 2B-D respectively.
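The three morphologies can also be told apart programmatically by counting how often the two identification curves cross. The sketch below is a hypothetical Python helper, not part of the original analysis (which was a visual inspection of the plots). It assumes a two-alternative task, so that the second curve is the maximum score minus the first, and it treats a token where the curves merely touch as no crossing.

```python
def count_crossovers(scores_a, max_score=10):
    """Count sign changes of (curve A - curve B), where curve B = max_score - curve A."""
    diffs = [2 * score - max_score for score in scores_a]
    signs = [d for d in diffs if d != 0]  # skip tokens where the curves are tied
    return sum(1 for prev, cur in zip(signs, signs[1:]) if (prev > 0) != (cur > 0))


def classify_plot(scores_a, max_score=10):
    """Label a plot as having no, a single, or multiple crossovers."""
    n = count_crossovers(scores_a, max_score)
    if n == 0:
        return "no crossover"
    if n == 1:
        return "single crossover"
    return "multiple crossovers"


if __name__ == "__main__":
    # All three score lists are invented for illustration.
    print(classify_plot([10, 10, 10, 10, 10, 10, 10, 8, 5, 2, 0, 0, 0, 0, 0]))
    print(classify_plot([6] * 15))
    print(classify_plot([9, 7, 4, 6, 3, 7, 2, 1, 0, 0, 0, 0, 0, 0, 0]))
```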

The parameters of categorical perception (50% crossover, LECB, UECB, PBW, slope, and intercepts) were derived from individual response plots of the participants for the four stimulus types. The data were tabulated and statistically analyzed in Statistical Package for the Social Sciences software (SPSS, version 21) (IBM Corp., Armonk, NY, USA). The data distribution was assessed using the Shapiro-Wilk test of normality, which showed that most of the parameters had a non-normal distribution.

In the perception of syllables, all participants in the two groups showed single crossover. This allowed estimation of parameters of categorical perception in all the fifty-seven participants (30 musicians and 27 non-musicians). Fig. 3A shows the median and interquartile range of identification score of the two syllables along the stimulus continuum in non-musicians and musicians. Comparison of plots of the two groups shows that 50% crossover occurred earlier, and the slope of the plot was steeper in musicians compared to non-musicians.

The median and interquartile range of the 50% crossover, LECB, UECB, PBW, slope, and intercept of the two groups of participants obtained for perception of syllables are given in Table 1. Results of the comparison of the two groups using the Mann-Whitney U test (Z and p-values) are given in Table 2. The results showed that musicians had a significantly higher slope but significantly lower 50% crossover, UECB, and PBW compared to non-musicians. There was no significant difference between the two groups in their LECB and intercept.
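For readers unfamiliar with the test, the Z and p-values of a Mann-Whitney U comparison can be sketched in plain Python. This is an illustrative implementation using the normal approximation with a tie correction and no continuity correction; whether this matches the exact SPSS settings used in the study is an assumption. The function names and the example data are hypothetical and are not the study's data.

```python
from math import sqrt
from statistics import NormalDist


def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # mean of the 1-based ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def mann_whitney(group_a, group_b):
    """Return (U, Z, two-sided p) using the tie-corrected normal approximation."""
    n1, n2 = len(group_a), len(group_b)
    pooled = list(group_a) + list(group_b)
    ranks = average_ranks(pooled)
    u1 = sum(ranks[:n1]) - n1 * (n1 + 1) / 2
    u = min(u1, n1 * n2 - u1)
    n = n1 + n2
    counts = {}
    for value in pooled:
        counts[value] = counts.get(value, 0) + 1
    tie_term = sum(t ** 3 - t for t in counts.values())
    sd = sqrt(n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1))))
    if sd == 0:  # every observation identical: no evidence of a difference
        return u, 0.0, 1.0
    z = (u - n1 * n2 / 2) / sd
    p = 2 * NormalDist().cdf(-abs(z))
    return u, z, p


if __name__ == "__main__":
    # Invented PBW values for two small groups, for illustration only.
    print(mann_whitney([1, 2, 3, 4, 5], [6, 7, 8, 9, 10]))
```

Because the smaller of the two U statistics is used, Z is zero or negative, which is the sign convention seen in Table 2.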

The individual plots of vowel identification showed a single crossover in all the 57 participants. Fig. 3B shows the median and interquartile range of identification score along the vowel continuum in musicians and non-musicians. The median and interquartile range of the 50% crossover, LECB, UECB, PBW, slope, and intercept are given in Table 3. The results of the comparison of these parameters using the Mann-Whitney U test (Z and p-values) are given in Table 2. Results revealed that none of the parameters were significantly different between the two groups.

The individual plots representing identification of swara (/ri/ and /ga/) along their continuum did not show categorical boundary in any of the non-musicians. On the other hand, all the participants in the musician group showed single crossover in the perception of vocal music continuum. Fig. 3C shows the median and interquartile range of identification scores obtained by musicians and non-musicians for the perception of /ri/ and /ga/ in the vocal music continuum. The median and interquartile range of the 50% crossover, LECB, UECB, PBW, slope, and intercept, for the musician group are given in Table 4.

Similar to the perception of the vocal music continuum, plots of identification of instrumental /ri/ and /ga/ along the continuum showed no definite categorical boundary in any of the non-musicians. On the contrary, all the musicians showed a single crossover in the perception of instrumental music tokens. Fig. 3D shows the median and interquartile range of identification scores of /ri/ and /ga/ obtained by musicians and non-musicians in the instrumental music continuum. The median and interquartile range of the 50% crossover, LECB, UECB, PBW, slope, and intercept for the musician group are given in Table 4.

Overall, the results revealed that both speech and music are perceived differently in musicians compared to non-musicians. In musicians, both speech and music are categorically perceived, whereas in non-musicians, only speech is perceived categorically.

The study used syllables and vowels to assess the characteristics of categorical perception in speech. As in the earlier studies [11-13], prominent categorical boundaries were observed in the /da/ to /ga/ continuum when the F2 transition was systematically reduced in steps of 25 Hz across the 15 tokens of the continuum. The presence of a categorical boundary supports the presence of categorical perception in this stimulus pair, and this was true in musicians as well as non-musicians. However, the 50% crossover occurred earlier and the stimulus-response function was steeper in musicians compared to non-musicians. This shows that music training influences the categorical perception of speech, which appears to be secondary to the improved fine-grained discrimination seen in musicians [7,8]. The findings are consistent with the observations from previous studies [11-13], wherein a steeper stimulus-response function is reported in musicians.

However, there was no significant difference in the parameters of categorical perception of vowels between musicians and non-musicians. This suggests that the influence of music training on the categorical perception of speech depends on the phonetics of speech and does not uniformly enhance perception of all classes of phonemes. One can speculate that transient versus sustained nature of the phonemes is a confounding variable while determining the influence of music training on speech perception.

Unlike speech perception, during the perception of music tokens, none of the non-musicians were found to have a categorical boundary in the continuum. However, all the musicians showed a clear categorical boundary in the perception of vocal as well as instrumental music tokens. The presence of a categorical function in the perception of music by musicians concurs with the findings of the previous studies [13]. While the earlier studies had used either musical tones or instrumental music [13,26], the current study used both. The two types of tokens differed in their vocal tract features. The vocal music tokens had features of the vocal tract, whereas the instrumental music was devoid of such features. Nonetheless, both types of music tokens revealed similar findings. Owing to their formal training, musicians are sensitive to pitch variations and hence, the difference in pitch was of relevance to them during the perception of vocal music. However, in the case of non-musicians, the change in pitch was not linguistically relevant. Therefore, the linguistic relevance appears to play an important role in determining the perceptual function.

The difference in the perception of music tokens between musicians and non-musicians suggests that training strongly determines the characteristics of perception. Based on the information-processing model [27], the occurrence of a categorical boundary in music perception could be viewed as a consequence of the musician either perceiving the stimulus as “speechlike” or finding the stimulus linguistically relevant. Either of the two conditions could be attributed to the years of music training that the musicians undergo. The F0 of the music tokens was systematically varied in the study, and musicians are reported to have better fine-grained abilities in identifying pitch changes [7,8]. Pitch being the primary cue in the identification of Raga (scale), the musicians’ auditory system appears to be wired for the accurate and categorical perception of adjacent “swaras” (notes). Such an ability is not witnessed in non-musicians. It is also important to note that this perceptual advantage in musicians is present in vocal as well as instrumental music and is not dependent on the presence or absence of vocal tract features.

Taken together, the findings suggest that categorical perception is not restricted to stimuli with vocal tract features and that instrumental music stimuli can also be perceived categorically. This refutes the assumption of the motor theory that “speech is perceived in a special mode” and suggests that the phonetic aspects of the stimulus are not necessary for the perceptual function to demonstrate a categorical boundary.

In conclusion, musicians demonstrate categorical boundary in the perception of speech as well as music stimuli, whereas non-musicians have categorical boundary only in the perception of speech stimulus. The findings of the study suggest that categorical boundary is not determined by the acoustic versus phonetic nature of the stimulus. Rather, it is influenced by the linguistic relevance of the stimulus, which in turn is determined by training.

Acknowledgments

We would like to thank the All India Institute of Speech and Hearing, Mysuru, for providing the necessary infrastructure and facilities to carry out the research. We would extend our sincere gratitude to all the participants of the study for their time and cooperation. No external source of funding was received to carry out the study.

Notes

Author Contributions

Conceptualization: all authors. Data curation: Yashaswini L. Formal analysis: Yashaswini L. Investigation: Yashaswini L. Methodology: all authors. Supervision: Sandeep Maruthy. Validation: Sandeep Maruthy. Writing - original draft: Yashaswini L. Writing - review & editing: Sandeep Maruthy. Approval of final manuscript: all authors.

Fig. 1.

Stimulus continuum of four stimulus types. Fifteen tokens of the /da/ to /ga/ continuum with a systematic change in the onset of second formant frequency (A), /u/ to /a/ continuum with a systematic change in F1/F2 (B), vocal /ri/ to /ga/ continuum (C) with a systematic change in F0 and instrumental /ri/ to /ga/ continuum (D) with a systematic change in F0.

Fig. 2.

A: Representative plots depicting the parameters of categorical perception: 50% crossover (a), lower edge of categorical boundary (b), upper edge of categorical boundary (c), and difference between lower and upper edge of categorical boundary, phoneme boundary width (d). B-D: Representative plots having multiple crossover, no crossover, and single crossover respectively. Maximum score is 10.

Fig. 3.

Median and interquartile range of identification score of the /da/ and /ga/ syllables (A), /u/ and /a/ vowels (B), vocal music /ri/ and /ga/ swaras (C) and instrumental music /ri/ and /ga/ swaras (D) along their continuum in non-musicians and musicians. Maximum score is 10.

Table 1.

Median and interquartile range of the 50% crossover, LECB, UECB, PBW, slope and intercept, for the perception of syllables /da/ and /ga/ in their continuum in musicians and non-musicians (n=57)

Table 2.

Results of the comparison of parameters using Mann-Whitney U test (Z and p-values) between musicians and non-musicians for the syllable and vowel stimulus continua

| Parameters | 50% crossover | LECB | UECB | PBW | Slope | Intercept |
|---|---|---|---|---|---|---|
| Syllable | | | | | | |
|  Z | -3.773 | -1.345 | -4.590 | -5.239 | -3.325 | -0.856 |
|  p | <0.01* | 0.179 | <0.01* | <0.01* | 0.01* | 0.392 |
| Vowel | | | | | | |
|  Z | -0.959 | -1.531 | -0.250 | -1.206 | -1.290 | -0.232 |
|  p | 0.338 | 0.126 | 0.803 | 0.228 | 0.197 | 0.218 |

Table 3.

Median and interquartile range of the 50% crossover, LECB, UECB, PBW, slope and intercept, for the perception of vowels /u/ and /a/ continuum in musicians and non-musicians (n=57)

Table 4.

Median and interquartile range of the 50% crossover, LECB, UECB, PBW, slope and intercept for the perception of vocal and instrumental music stimulus continuum in musicians (n=30)

REFERENCES

1. Gerrits E, Schouten ME. Categorical perception depends on the discrimination task. Percept Psychophys 2004;66:363–76.

2. Prather JF, Nowicki S, Anderson RC, Peters S, Mooney R. Neural correlates of categorical perception in learned vocal communication. Nat Neurosci 2009;12:221–8.

3. Eimas PD, Siqueland ER, Jusczyk P, Vigorito J. Speech perception in infants. Science 1971;171:303–6.

4. Mody M, Studdert-Kennedy M, Brady S. Speech perception deficits in poor readers: auditory processing or phonological coding? J Exp Child Psychol 1997;64:199–231.

5. Liberman AM, Mattingly IG. The motor theory of speech perception revised. Cognition 1985;21:1–36.

6. Jusczyk PW, Rosner BS, Cutting JE, Foard CF, Smith LB. Categorical perception of nonspeech sounds by 2-month-old infants. Percept Psychophys 1977;21:50–4.

7. Micheyl C, Delhommeau K, Perrot X, Oxenham AJ. Influence of musical and psychoacoustical training on pitch discrimination. Hear Res 2006;219:36–47.

8. Deguchi C, Boureux M, Sarlo M, Besson M, Grassi M, Schön D, et al. Sentence pitch change detection in the native and unfamiliar language in musicians and non-musicians: behavioral, electrophysiological and psychoacoustic study. Brain Res 2012;1455:75–89.

9. Oxenham AJ, Fligor BJ, Mason CR, Kidd G Jr. Informational masking and musical training. J Acoust Soc Am 2003;114:1543–9.

10. Rammsayer T, Altenmüller E. Temporal information processing in musicians and nonmusicians. Music Percept 2006;24:37–48.

11. Bidelman GM, Moreno S, Alain C. Tracing the emergence of categorical speech perception in the human auditory system. Neuroimage 2013;79:201–12.

12. Bidelman GM, Weiss MW, Moreno S, Alain C. Coordinated plasticity in brainstem and auditory cortex contributes to enhanced categorical speech perception in musicians. Eur J Neurosci 2014;40:2662–73.

13. Burns EM, Ward WD. Categorical perception--phenomenon or epiphenomenon: evidence from experiments in the perception of melodic musical intervals. J Acoust Soc Am 1978;63:456–68.

14. Bidelman GM, Alain C. Musical training orchestrates coordinated neuroplasticity in auditory brainstem and cortex to counteract age-related declines in categorical vowel perception. J Neurosci 2015;35:1240–9.

15. Wu H, Ma X, Zhang L, Liu Y, Zhang Y, Shu H. Musical experience modulates categorical perception of lexical tones in native Chinese speakers. Front Psychol 2015;6:436.

17. Cutting JE, Rosner BS. Categories and boundaries in speech and music. Percept Psychophys 1974;16:564–70.

18. Siegel JA, Siegel W. Absolute identification of notes and intervals by musicians. Percept Psychophys 1977;21:143–52.

19. Faul F, Erdfelder E, Lang AG, Buchner A. G*Power 3: a flexible statistical power analysis program for the social, behavioral, and biomedical sciences. Behav Res Methods 2007;39:175–91.

20. Vaidyanath R, Yathiraj A. Screening checklist for auditory processing in adults (SCAP-A): development and preliminary findings. J Hear Sci 2014;4:33–43.

21. American National Standard Institute. (R2008) AS 1-1999. Maximum permissible ambient noise levels for audiometric test rooms. New York, USA: American National Standards Institute, Inc.;1999.

22. Venkatesan S. Ethical guidelines for bio-behavioral research. Mysuru, India: All India Institute of Speech and Hearing;2009.

23. Doughty JM. The effect of psychophysical method and context on pitch and loudness functions. J Exp Psychol 1949;39:729–45.

24. Powlin AC. Awareness and attitude towards stuttering among normal school going children [dissertation]. Karnataka: University of Mysore;2006.

25. Powlin AC. Effect of temporal and spectral variations on phoneme identification skills in late talking children [dissertation]. Karnataka: University of Mysore;2009.

26. Pastore RE, Schmuckler MA, Rosenblum L, Szczesiul R. Duplex perception with musical stimuli. Percept Psychophys 1983;33:469–74.

27. Cutting JA, Pisoni DB. An information-processing approach to speech perception. In: Kavanagh JF, Strange W. editors. Implications of basic research in speech and language for the school and clinic. Cambridge: MIT Press;1978.